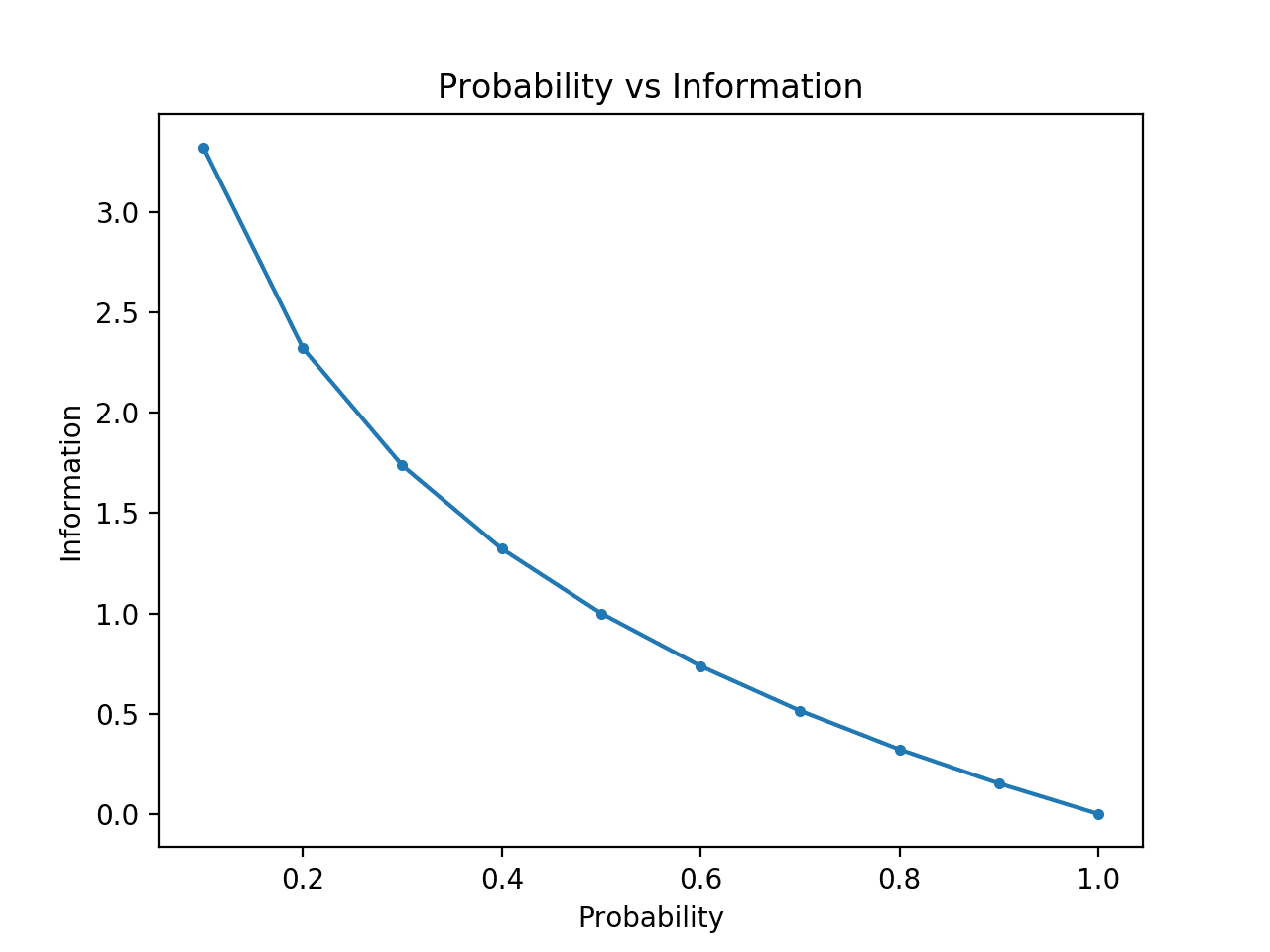

The higher the entropy of a random variable, the closer that random variable is to having all of its outcomes being equally likely. Entropy as a measure of uniformness: The first angle views entropy as a degree of uniformness of a random variable.In the next two subsections we’ll discuss two angles from which to view information entropy: Intuitively, since self-information describes the degree of surprise of an event, the entropy of a random variable tells us, on average, how surprised we are going to be by the outcome of the random variable. Said differently, the entropy of $X$ is simply the average self-information over all of the possible outcomes of $X$. Given a random variable $X$, the entropy of $X$, denoted $H(X)$ is simply the expected self-information over its outcomes:\ = -\sum_$ is the codomain of $X$. The concept of information entropy extends this idea to discrete random variables. Given an event within a probability space, self-information describes the information content inherent in that event occuring. In turn, this discussion will explain how the base of the logarithm used in $I$ corresponds to the number of symbols that we are using in a hypothetical scenario in which we wish to communicate random events. It is within this context that the concept of information entropy begins to materialize. Why is that? In this post, we will place the idea of “information as surprise” within a context that involves communicating surprising events between two agents.

One strange thing about this equation is that it seems to change with respect to the base of the logarithm. Thus, in information theory, information is a function, $I$, called self-information, that operates on probability values $p \in $:\

We discussed how surprise intuitively should correspond to probability in that an event with low probability elicits more surprise because it is unlikely to occur. In the first post of this series, we discussed how Shannon’s Information Theory defines the information content of an event as the degree of surprise that an agent experiences when the event occurs. In this second post, we will build on this foundation to discuss the concept of information entropy. In the first post, we discussed the concept of self-information. In this series of posts, I will attempt to describe my understanding of how, both philosophically and mathematically, information theory defines the polymorphic, and often amorphous, concept of information. The mathematical field of information theory attempts to mathematically describe the concept of “information”. Information entropy (Foundations of information theory: Part 2)
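To make the definitions of self-information and entropy concrete, here is a minimal Python sketch. The function names `self_information` and `entropy` and the `base` parameter are my own choices for illustration and do not come from the post. The sketch assumes a discrete random variable described by a list of outcome probabilities, and it treats outcomes with zero probability as contributing nothing to the entropy.

```python
import math


def self_information(p, base=2.0):
    """Self-information I(p) = -log(p) of an event with probability p."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must lie in (0, 1]")
    return -math.log(p, base)


def entropy(probs, base=2.0):
    """Entropy H(X): the expected self-information over the outcomes of X.

    `probs` lists the probability of each outcome and must sum to 1.
    Outcomes with probability 0 are skipped (they contribute nothing).
    """
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return sum(p * self_information(p, base) for p in probs if p > 0)


# Two hypothetical random variables with four outcomes each.
uniform = [0.25, 0.25, 0.25, 0.25]
skewed = [0.7, 0.1, 0.1, 0.1]

# The uniform variable has the higher entropy of the two.
print("uniform:", entropy(uniform))
print("skewed: ", entropy(skewed))

# Changing the base only rescales the result by a constant factor.
print("skewed, base e:", entropy(skewed, base=math.e))
print("skewed, base 2 times ln 2:", entropy(skewed) * math.log(2))
```

The last two printed values should agree up to floating-point error, which suggests that the choice of base acts like a choice of unit rather than changing what is being measured.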
